Computex 2023: Nvidia Keynote

OUR COMPUTEX 2023 COVERAGE IS MADE POSSIBLE WITH THE SUPPORT OF: ASRock, BeQuiet and MSI.

True to tradition, it was a large, well-organized event with press, investors and guests from all over the world, and it was part of the official Computex program. We did not exactly expect to be blown away by gaming-related news, as Nvidia had already launched its RTX 4060 card prior to Computex.

So the expectations for new launches were almost non-existent. Given the trend of the time, it was no surprise that the presentation started with AI. It is an area where Nvidia is very strong, and it is a contributing factor to the record financial results Nvidia recently presented and the subsequent jump in its share price.

"I am AI" was the tagline that remained after an intro video about all the different ways AI can be used, all to the tune of AI-composed music played by the London Symphony Orchestra. Very grand, just the way I imagine Jensen prefers it.

Although our gaming hopes were small, there was a bit to take in after all. Jensen spent some time talking about the leap in ray tracing following Nvidia's launch of its RT Cores. The thread was tied back to AI and how it is used together with RT Cores to create the graphical elements faster.

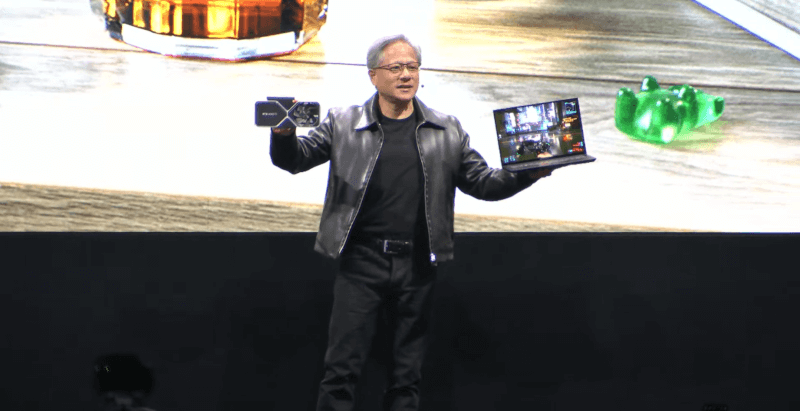

He also brought a 14" laptop on stage together with an RTX 4060 graphics card. The story, of course, was that the small laptop with an RTX 4060 and AI (DLSS) could deliver better graphics performance in a small package and beat the performance of the consoles.

Nvidia ACE

From here, Jensen moved on to the new Nvidia ACE, which was the most exciting part of the presentation in relation to our interests. Nvidia ACE is a tool for AI-generated avatars for games, among other things. It combines several different technologies so that voices, animations and the interaction with the player are all dynamically generated by AI. The idea is that Nvidia ACE lets you generate characters for your games faster and more easily.

They showed a demo made in Unreal Engine 5, which reportedly used Nvidia ACE for a game-like scene where the player walked up to an NPC and had a conversation with him. The conversation was not scripted in advance. The player simply spoke to the character, and the character responded, via AI, based on a backstory that he had been given.

The system simultaneously generated all animations, facial expressions and the like, based on the conversation it had and the answers it gave.

It was a slightly stiff experience, but if the premises that Jensen laid out for the demo were accurate, then it seems promising. Just the idea that you can choose your own dialogue in a game and have an NPC respond dynamically, based on the knowledge it is programmed to have, sounds wild.

Looking at the possibilities with services such as ChatGPT, it does not seem unlikely that something similar could become part of NPCs in games. With the current development in the field, it will be exciting to see how it is integrated and whether it can provide almost infinite dialogue possibilities.

After the small gaming bit, however, the rest of Nvidia's keynote was dedicated to hardcore server discussion. That is of course not so surprising, since the vast majority of Nvidia's income comes from that segment. However, it is not something that we normally deal with here at Tweak, as these are systems for cloud computing and data centers for companies such as Google, Meta and Microsoft. So this is where I will round off the coverage for this time. If you are interested in the heavier server stuff from Nvidia, Jensen Huang's entire keynote can be found on YouTube.
