Nvidia's Blackwell will be an expensive affair


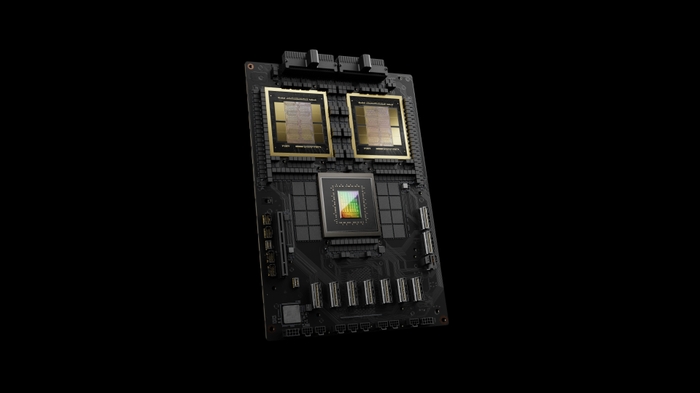

Analysts at HSBC expect Nvidia's Blackwell GPUs for AI applications to cost more than the company's Hopper-based processors. According to @firstadopter, a senior writer at Barron's, a single GB200 Superchip (one CPU plus two GPUs) can cost up to $70,000. However, Nvidia may prefer to sell complete Blackwell-based servers rather than individual chips, especially since the GB200 NVL72 servers are expected to cost up to $3 million each.

HSBC estimates that Nvidia's 'entry' B100 GPU will have an average selling price of between $30,000 and $35,000, which is within the price range of Nvidia's H100. The more powerful GB200, which combines a single Grace CPU with two B200 GPUs, is expected to cost between $60,000 and $70,000. Because these are only analyst estimates, it may end up costing somewhat more.

Servers based on Nvidia's design will be much more expensive. The Nvidia GB200 NVL36 with 36 B200 GPUs (18 GB200 Superchips) could sell for an average of $1.8 million, while the Nvidia GB200 NVL72 with 72 B200 GPUs (36 GB200 Superchips) could fetch around $3 million, according to the alleged HSBC figures. When Nvidia CEO Jensen Huang presented the Blackwell data center chips at GTC 2024, it was clear that the intention is to move entire racks of servers. Huang repeatedly stated that when he thinks of a GPU, he now envisions the NVL72 rack.

The entire rack is linked by high-bandwidth interconnects so that it acts as one massive GPU with a total of 13,824 GB of VRAM, a crucial factor in training ever-larger LLMs. By selling whole systems instead of separate GPUs or Superchips, Nvidia can capture some of the premium otherwise earned by system integrators, which will increase the company's revenue and profitability. With competitors AMD and Intel only slowly gaining a foothold with their AI processors, Nvidia can still sell its own at a huge premium.
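The 13,824 GB total can be broken down per GPU with a little arithmetic. The following is a minimal sketch, assuming the "72" in NVL72 refers to 72 B200 GPUs in the rack; the constant and function names are illustrative only.

```python
# Total rack VRAM as quoted in the article.
TOTAL_VRAM_GB = 13_824

# Assumption: an NVL72 rack holds 72 B200 GPUs (the "72" in the name).
GPUS_PER_RACK = 72


def vram_per_gpu(total_gb: float, gpu_count: int) -> float:
    """Return the average VRAM per GPU implied by a rack-level total."""
    return total_gb / gpu_count


per_gpu_gb = vram_per_gpu(TOTAL_VRAM_GB, GPUS_PER_RACK)
print(f"Implied VRAM per GPU: {per_gpu_gb:.0f} GB")
```

Under that assumption, the rack total works out to 192 GB per GPU.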

Therefore, the price estimates allegedly prepared by HSBC are not particularly surprising. It is also important to highlight the differences between the H200 and the GB200. Individual H200 GPUs are already priced at up to $40,000. A GB200 effectively quadruples the amount of GPU silicon (four dies, two per B200) and adds the Grace CPU and the large PCB for the so-called Superchip. Raw compute for a single GB200 Superchip is 5 petaflops of FP16 (10 petaflops with sparsity), compared with 1 petaflop (2 petaflops with sparsity) on the H200.

That's about five times the compute, not even considering other architectural upgrades. Keep in mind that actual prices for data center-grade hardware always depend on individual contracts, the amount of hardware ordered, and other negotiations. These estimates should therefore be taken with a grain of salt. Bigger buyers like Amazon and Microsoft are likely to get big discounts, while smaller customers could end up paying even higher prices than those reported by HSBC.
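To put the compute and price figures side by side, here is a rough sketch of the cost per dense FP16 petaflop using only the numbers quoted above. It treats the estimates as list prices and ignores discounts, memory, networking and power, so it is an illustration rather than a real cost model; all names are hypothetical.

```python
# Dense FP16 throughput in petaflops, as quoted in the article.
GB200_PFLOPS_DENSE = 5.0
H200_PFLOPS_DENSE = 1.0

# Price estimates in USD, as quoted in the article (analyst figures, not list prices).
GB200_PRICE_RANGE = (60_000, 70_000)
H200_PRICE_MAX = 40_000


def usd_per_pflop(price_usd: float, pflops: float) -> float:
    """Return the price paid per dense FP16 petaflop."""
    return price_usd / pflops


# Compute ratio between one GB200 Superchip and one H200.
compute_ratio = GB200_PFLOPS_DENSE / H200_PFLOPS_DENSE

# Cost per petaflop at the low and high ends of the GB200 estimate.
gb200_low = usd_per_pflop(GB200_PRICE_RANGE[0], GB200_PFLOPS_DENSE)
gb200_high = usd_per_pflop(GB200_PRICE_RANGE[1], GB200_PFLOPS_DENSE)

# Cost per petaflop at the top of the quoted H200 price.
h200_max = usd_per_pflop(H200_PRICE_MAX, H200_PFLOPS_DENSE)

print(f"Compute ratio (GB200 vs H200): {compute_ratio:.0f}x")
print(f"GB200: ${gb200_low:,.0f} to ${gb200_high:,.0f} per petaflop")
print(f"H200:  up to ${h200_max:,.0f} per petaflop")
```

On these figures, the GB200 comes out at roughly $12,000 to $14,000 per petaflop against up to $40,000 for the H200, which suggests the higher sticker price still buys more compute per dollar.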
